Designing Gifted Identification with Purpose

Identifying students who need gifted educational programming requires schools to balance accuracy, fairness, and feasibility. In practice, gifted identification systems must operate within limited testing windows, staffing constraints, and budget realities, while also meeting growing expectations for fairness and transparency. These pressures make it essential that identification models are not only research-based but also practical to implement at scale.

Decades of research show that narrow identification approaches, such as reliance on a single test or rigid criteria, can overlook many capable learners, particularly those from historically underserved groups¹. As a result, best practice in gifted education emphasizes the use of accessible assessments, multiple measures, and clearly defined decision rules²,³. When these elements are thoughtfully aligned, identification systems are more defensible, inclusive, and better connected to the services students ultimately receive⁴.

From Assessment Design to Identification Decisions

The Naglieri General Ability Tests™ (Naglieri—Verbal, Naglieri—Quantitative, and Naglieri—Nonverbal) were designed to support these goals. By focusing on ability rather than acquired knowledge, minimizing language demands, and using animated instructions, these assessments reduce common barriers to access. The three tests provide distinct measures of general ability (verbal, quantitative, and nonverbal) that may be used individually or in any combination, allowing districts to tailor identification systems to their definitions of giftedness and service models.

The following analysis examines how the Naglieri General Ability Tests can be applied within different gifted identification frameworks. Specifically, we focus on two design choices that shape identification outcomes when a cutoff at the 95th percentile is applied:

- the type of norms used and the local context for norming

- how multiple measures are combined

Understanding these choices—and how they interact—can help districts design systems that are equitable, transparent, and sustainable.

Choosing the Type of Norms: National or Local?

The choice of norms is a foundational decision in gifted identification because it directly affects who is identified and how the results are interpreted. National norms compare each student’s score to a nationally representative sample of peers, indicating whether the score is above or below average relative to students nationwide. They provide an external benchmark that supports consistency and comparability across districts and over time. For some systems, this stability is appealing, particularly when alignment with external expectations is a priority. However, national norms do not account for regional or local differences in students’ educational environments. Because schools are often shaped by neighborhood factors, national comparisons can produce high identification rates in some districts or schools and very low rates in others. This pattern can leave advanced learners in lower-performing contexts under-identified.

Local norms address this issue by comparing students to peers within a shared context, such as a district or school. This approach is more sensitive to differences in opportunity and often surfaces students who would benefit from additional challenge in every district or school building. The trade-offs include reduced cross-site comparability, the need for universal screening (which can create logistical challenges), and a greater need for clear communication to help stakeholders interpret results in the local context. Many districts follow best practice recommendations by documenting a clear rationale for their norming approach and periodically reviewing outcomes to ensure alignment with program goals.


What national norms do

- Compare students to a nationally representative peer group

- Indicate whether scores are above or below average nationwide

- Support consistency and comparability across districts and time

What national norms may miss

- Regional and local differences in educational opportunity

- Contextual factors that shape school performance

- Advanced learners in lower-performing settings who may be overlooked

Local norms compare students to peers within a shared context, such as a district or school.

When local norms are used:

- Identification is more sensitive to opportunity differences

- Students who may benefit from additional challenge are identified in every district or school building

However:

- Comparability across sites is reduced

- Universal screening may be required, creating logistical considerations

- Clear communication with stakeholders becomes essential

Many districts follow best practices by documenting their rationale for local norming and reviewing outcomes over time to ensure alignment with program goals.
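The national-versus-local distinction can be made concrete by converting one student's score into both kinds of percentile. The sketch below does this for a single hypothetical student. It assumes, for illustration only, that national norms follow a normal distribution with mean 100 and SD 15. It also assumes that a local percentile is the percentage of local peers who scored below the student. Operational norms and percentile conventions may differ, and the district scores are invented.

```python
from bisect import bisect_left
from statistics import NormalDist
from typing import Sequence

# Hypothetical national norm: standard scores with mean 100 and SD 15.
NATIONAL_NORM = NormalDist(mu=100, sigma=15)


def national_percentile(score: float) -> float:
    """Percentile of `score` against the assumed normal national distribution."""
    return 100 * NATIONAL_NORM.cdf(score)


def local_percentile(score: float, local_scores: Sequence[float]) -> float:
    """Percentage of local peers (district or school) who scored below `score`."""
    ordered = sorted(local_scores)
    return 100 * bisect_left(ordered, score) / len(ordered)


# Hypothetical scores for one district whose students score below the national average.
district_scores = [85, 88, 90, 92, 93, 95, 96, 97, 98, 99,
                   100, 101, 102, 103, 104, 105, 107, 109, 112, 118]

student = 118
print(f"national: {national_percentile(student):.1f}")
print(f"local:    {local_percentile(student, district_scores):.1f}")
```

Under these assumptions, a score of 118 sits near the 88th percentile nationally (88.5) but at the 95th percentile within this district (95.0). A 95th percentile cutoff would therefore flag this student only under local norms.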

Defining the Local Context

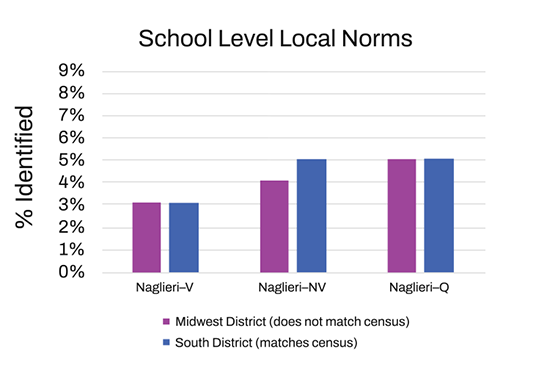

When local norms are used, districts must decide how broadly to define the comparison group.

District-level norms typically offer larger samples and greater year-to-year stability, which can simplify implementation and messaging. However, this level of norming may be less sensitive to students who stand out within their individual schools.

School-level norms maximize contextual sensitivity by identifying students relative to peers in the same building. This can support equity goals by ensuring that advanced learners are identified in every school, but it may introduce variability in cut scores and require more explanation for families and educators.

Some districts explore hybrid approaches, such as examining both district- and school-referenced indicators or grouping schools with similar contexts within a district (for example, Title I and non-Title I schools). Regardless of the approach, documenting the rationale and monitoring outcomes over time is critical for transparency and defensibility.
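The choice of comparison group can be expressed as a grouping key. In this minimal sketch, one function applies district-level, school-level, or clustered norms, depending on which field it groups by. The data are invented. The 95th percentile comes from Python's `statistics.quantiles` using the "inclusive" method, and other percentile definitions can shift the cutoff slightly.

```python
from collections import defaultdict
from statistics import quantiles


def cutoff_95(scores):
    """95th percentile of a reference group (last of 19 cut points for 20 equal groups)."""
    return quantiles(scores, n=20, method="inclusive")[-1]


def identify_by_group(students, group_key):
    """Flag students at or above the 95th percentile of whichever group `group_key` names."""
    groups = defaultdict(list)
    for s in students:
        groups[s[group_key]].append(s)
    flagged = set()
    for members in groups.values():
        cut = cutoff_95([m["score"] for m in members])
        flagged.update(m["id"] for m in members if m["score"] >= cut)
    return flagged


# Hypothetical district with one higher-scoring and one lower-scoring school.
students = (
    [{"id": f"A{i}", "school": "A", "cluster": "non-Title I", "score": 100 + i} for i in range(20)]
    + [{"id": f"B{i}", "school": "B", "cluster": "Title I", "score": 80 + i} for i in range(20)]
)
for s in students:
    s["district"] = "D1"

print(sorted(identify_by_group(students, "district")))  # district-level norms
print(sorted(identify_by_group(students, "school")))    # school-level norms
```

In this toy district, district-level norms flag two students, both from School A (`['A18', 'A19']`). School-level norms instead flag the top student in each building (`['A19', 'B19']`). Grouping by the hypothetical `cluster` field (Title I versus non-Title I) works the same way.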

Combining Multiple Measures

Using multiple measures strengthens gifted identification, but the rules used to combine those measures have a substantial impact on outcomes. Decision rules should be defined after careful examination of the scores and aligned with program intent.

- An OR rule allows students to qualify by meeting the cutoff on any measure. This approach increases sensitivity and can surface a more diverse group of learners, though it may also increase the likelihood of false positives and the size of the identified population.

- An AND rule requires students to meet cutoffs on all selected measures. This approach typically yields a smaller, more homogeneous group and reduces the likelihood of false positives, but it can miss students with domain-specific strengths.

- A 2-of-3 rule requires students to meet the cutoff on any two of the three measures, positioning it between OR and AND rules in terms of restrictiveness. This approach can identify students with strong but not uniformly elevated profiles, reducing the likelihood of exclusion due to a single lower score while maintaining more stringent criteria than an OR rule.

- An AVERAGE rule balances scores across measures, reducing the influence of extreme highs or lows. While this approach can stabilize decisions, it may also mask exceptional strengths in a single domain. Some tests refer to this average as a TOTAL score. The Naglieri General Ability Tests, however, provide a TOTAL score that reflects the average of two or three test scores while also accounting for how uncommon it is to score highly across multiple tests.

Each rule reflects a different philosophy of giftedness and should align with the services a district is prepared to provide.
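The four rules can be written as small predicate functions, applied here to three hypothetical student profiles. This is a sketch, not a scoring procedure from the test publisher. It assumes all three scores are standard scores on a hypothetical mean-100, SD-15 scale and applies the 95th percentile cutoff used in this article. The TOTAL score is omitted because its computation is not described here.

```python
from statistics import NormalDist, mean

# Hypothetical scale: standard scores with mean 100 and SD 15.
# The 95th percentile on that scale is about 124.7.
CUT = NormalDist(100, 15).inv_cdf(0.95)


def meets(score: float) -> bool:
    return score >= CUT


def or_rule(scores) -> bool:
    """Qualify on any single measure."""
    return any(meets(s) for s in scores)


def and_rule(scores) -> bool:
    """Qualify only if every measure meets the cutoff."""
    return all(meets(s) for s in scores)


def two_of_three_rule(scores) -> bool:
    """Qualify if at least two measures meet the cutoff."""
    return sum(meets(s) for s in scores) >= 2


def average_rule(scores) -> bool:
    """Qualify if the plain average of the scores meets the single-test cutoff."""
    return mean(scores) >= CUT


RULES = {"OR": or_rule, "AND": and_rule, "2-of-3": two_of_three_rule, "AVERAGE": average_rule}

# Hypothetical (verbal, quantitative, nonverbal) profiles.
profiles = {
    "single standout": (140, 105, 110),
    "two strengths": (128, 126, 115),
    "uniformly high": (126, 127, 125),
}

for name, scores in profiles.items():
    passed = [rule for rule, fn in RULES.items() if fn(scores)]
    print(f"{name}: {passed}")
```

The single-standout profile qualifies only under OR. The two-strengths profile also passes 2-of-3 but fails AVERAGE (its mean is 123). Only the uniformly high profile passes every rule.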

Setting Cutoff Scores

Cutoff scores translate assessment results into placement decisions. More selective cutoffs produce smaller groups and may simplify service delivery, while broader cutoffs increase access and reduce the risk of overlooking capable students. Some schools use a specific cutoff as a threshold for other testing or decision-making, while others rely on a rubric with specific cutoffs on some or all of the administered tests. Importantly, cutoff effects also depend on other design choices: the same percentile cutoff can yield very different outcomes depending on the norm group and decision rule used. Best practice involves piloting cutoffs with different design choices, examining identification patterns and desired outcomes, and aligning identification rates with service capacity.
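Piloting cutoffs amounts to computing the identification rate under each candidate cutoff and comparing it with what the program can serve. The sketch below assumes hypothetical percentile ranks for a screened pool and a capacity expressed as a share of students. In practice, the percentiles would come from whichever norm group and combination rule the district has chosen.

```python
def identification_rate(percentiles, cutoff):
    """Share of students at or above a percentile cutoff."""
    return sum(p >= cutoff for p in percentiles) / len(percentiles)


def pilot_cutoffs(percentiles, candidate_cutoffs, capacity):
    """Rate for each candidate cutoff, plus the most inclusive cutoff that fits capacity.

    `capacity` is the share of students the program can serve (e.g., 0.07 for 7%).
    """
    results = [(c, identification_rate(percentiles, c)) for c in sorted(candidate_cutoffs)]
    fits = [c for c, rate in results if rate <= capacity]
    return results, (min(fits) if fits else None)


# Hypothetical pool: 100 students with percentile ranks 1 through 99 (two students at 99).
pool = list(range(1, 100)) + [99]
results, chosen = pilot_cutoffs(pool, [90, 95, 98], capacity=0.07)
for cutoff, rate in results:
    print(f"cutoff {cutoff}: {rate:.0%} identified")
print("most inclusive cutoff within capacity:", chosen)
```

For this pool, the 90th, 95th, and 98th percentile cutoffs identify 11%, 6%, and 3% of students. A 7% capacity therefore points to the 95th percentile as the most inclusive cutoff the program could serve.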

To illustrate how these design choices interact in practice, we examined identification outcomes across different norming approaches and combination rules, while maintaining the same cutoff score (95th percentile), using recent, real-world test results from students in the U.S.

Illustrating the Impact of Different Design Choices

National vs. Local Norms

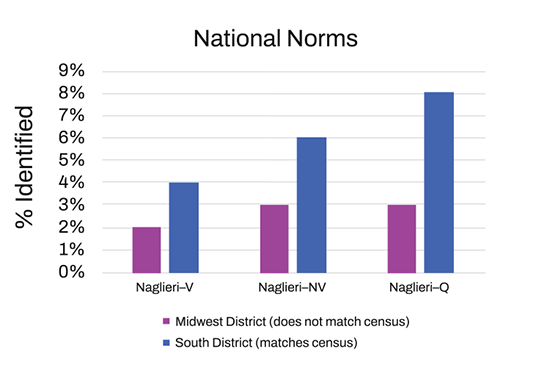

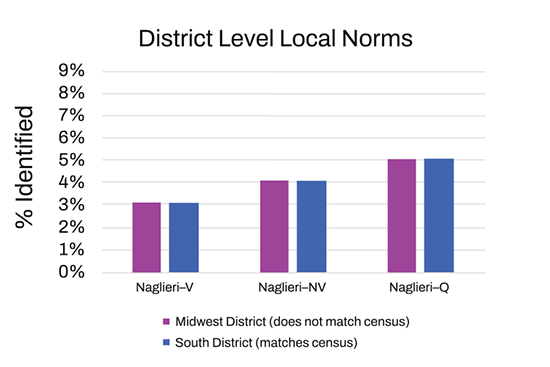

We first examined how norm selection (national versus local) influenced identification rates in districts that either closely matched or diverged from national census data. Local norms were calculated at both the district and school levels.

Test scores were analyzed for students from two districts who completed all three of the Naglieri General Ability Tests between 2023 and 2025. One district in the Midwest included 4,711 students in Kindergarten and Grade 5 and did not closely align with the U.S. census demographic group proportions (an average difference of 12%). The other district from the South included 2,603 students in Kindergarten, Grade 2, and Grade 4 and closely matched the U.S. census population in terms of key demographic groups (an average difference of 3%).

Figure 1 shows the percentage of students identified as gifted within each school district, comparing age-based normative scores⁵ (coming soon) at the national, district, and school levels with a 95th percentile cutoff, using only one Naglieri General Ability Test at a time.

Figure 1. Identification Rate by District: Single Test

Looking at these results, differences emerge between the district that matches U.S. census demographics (the South district) and the district that diverges more from the census (the Midwest district). Under national norms, identification rates were higher in the South district than in the Midwest district. These results mirror past research showing that, under national norms, districts that more closely match the U.S. census in demographic representation (e.g., gender, race/ethnicity) tend to identify more gifted learners than those that do not⁶. In the Midwest district, 1–2% more students would be identified using local norms than using national norms. Additionally, identification rates were similar for district-level and school-level local norms in both districts. This is because local norms compare students only to peers in their immediate setting. With a 95th percentile cutoff, they identify roughly the top 5% of whichever group serves as the reference, which also tends to produce similar results across demographic groups. National norms, by contrast, compare students to a much broader population.
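A small simulation can illustrate why local norms behave this way. A local 95th percentile cutoff flags about 5% of its own reference group by construction. A fixed national cutoff, in contrast, flags a share that depends on how each group's scores compare with the nation. The sketch uses two invented schools with different score distributions and a hypothetical mean-100, SD-15 national scale. It is not based on the study data.

```python
import random
from statistics import NormalDist, quantiles

# Hypothetical national scale: mean 100, SD 15; the 95th percentile is about 124.7.
NATIONAL_CUT = NormalDist(100, 15).inv_cdf(0.95)


def rate_at_or_above(scores, cut):
    """Share of scores at or above `cut`."""
    return sum(s >= cut for s in scores) / len(scores)


def local_cut(scores):
    """95th percentile of the local reference group itself."""
    return quantiles(scores, n=20, method="inclusive")[-1]


rng = random.Random(2024)
# Two hypothetical schools with different score distributions.
schools = {
    "higher-scoring": [rng.gauss(110, 15) for _ in range(5000)],
    "lower-scoring": [rng.gauss(92, 15) for _ in range(5000)],
}

for name, scores in schools.items():
    national = rate_at_or_above(scores, NATIONAL_CUT)
    local = rate_at_or_above(scores, local_cut(scores))
    print(f"{name}: national {national:.1%}, local {local:.1%}")
```

Each school's local rate comes out at 5.0%. The national rates diverge sharply: the higher-scoring school lands well above 5% and the lower-scoring school well below it.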

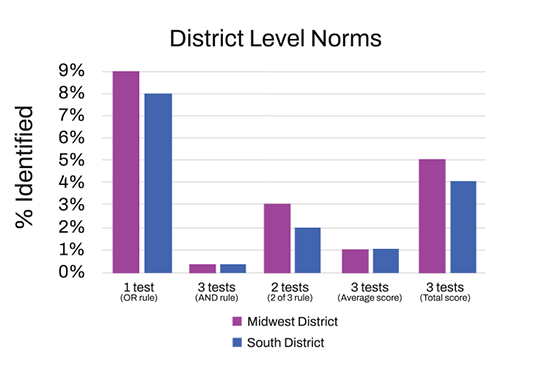

Test Combinations

Next, we examined variations in how multiple tests can be combined, keeping the same 95th percentile cutoff. Specifically, in Figure 2, we compared identification rates under:

- an OR rule, which allows qualification based on meeting the cutoff on any single measure;

- an AND rule, which requires students to meet the cutoff on all three measures;

- a 2-of-3 rule, which requires students to meet the cutoff on 2 of the 3 tests;

- an AVERAGE score approach, which applies the cutoff to the arithmetic mean of the three test scores; and

- a TOTAL score approach, which is unique to the Naglieri General Ability Tests and offers an average score across all tests that accounts for the relatedness of the three tests.

Identification rates across these combination rules were examined to evaluate how alternative decision frameworks shaped the size of the identified student group, using district-level norms as an illustrative example. Figure 2 presents these district-level results for both districts.

Figure 2. Identification Rate by District: Multi-Test Combination Results (District-Level Norms)

Identification rates varied substantially depending on how test scores were combined, highlighting the importance of aligning decision rules with program goals and service capacity.

OR rule

- Produced the highest identification rates across both districts (up to 9%)

- Casts a wider net by allowing students to qualify based on strength in any single measure

- May exceed the capacity of programs designed to serve a limited proportion of students

- Can be appropriate for:

  - Identifying a smaller group for additional testing

  - Tiered service models where broader access is supported (e.g., differentiated instruction, as recommended by the National Association for Gifted Children)⁷

AND and 2-of-3 rules

- Yielded substantially lower identification rates across districts and norm options

  - AND rule: near 0–1%

  - 2-of-3 rule: up to 3%

- Require high performance across multiple measures, making them more restrictive

- Lower identification rates likely reflect the difficulty of scoring uniformly high across tests that measure general ability using different content and formats—not a lack of ability

- May require a lower cutoff score if broader identification is desired
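The restrictiveness of the AND and 2-of-3 rules follows from how rarely correlated tests are all high at once. The simulation below is a sketch under stated assumptions: three normally distributed z-scores with a common pairwise correlation of 0.6, with the 95th percentile cutoff applied to each. The 0.6 value is chosen for illustration, not taken from Naglieri technical data. The scores are generated from a one-factor model.

```python
import random
from math import sqrt
from statistics import NormalDist

Z_CUT = NormalDist().inv_cdf(0.95)  # 95th percentile in z-score units


def simulate_profiles(n, r, seed=7):
    """Draw n students with three test z-scores sharing pairwise correlation r.

    One-factor model: each score = sqrt(r) * general + sqrt(1 - r) * specific,
    which gives every score variance 1 and every pair correlation r.
    """
    rng = random.Random(seed)
    profiles = []
    for _ in range(n):
        g = rng.gauss(0, 1)
        profiles.append(tuple(sqrt(r) * g + sqrt(1 - r) * rng.gauss(0, 1) for _ in range(3)))
    return profiles


def rule_rates(profiles):
    """Share of students qualifying under OR, 2-of-3, and AND rules."""
    counts = {"OR": 0, "2-of-3": 0, "AND": 0}
    for zs in profiles:
        hits = sum(z >= Z_CUT for z in zs)
        counts["OR"] += hits >= 1
        counts["2-of-3"] += hits >= 2
        counts["AND"] += hits == 3
    return {rule: c / len(profiles) for rule, c in counts.items()}


rates = rule_rates(simulate_profiles(n=20_000, r=0.6))  # r = 0.6 is an assumption
print({rule: f"{rate:.1%}" for rule, rate in rates.items()})
```

Under these assumptions, OR flags roughly twice the single-test rate, 2-of-3 falls below 5%, and AND drops to around 1%. All three rates converge toward 5% as the assumed correlation approaches 1.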

AVERAGE and TOTAL score approaches

- Aggregate performance across all three tests

- AVERAGE rule: produced consistently low identification rates (approximately 1%)

- TOTAL score: yielded identification rates closer to a hypothetical 5% benchmark

- TOTAL scores account for the relative rarity of consistently high performance across multiple measures of general ability
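The gap between the AVERAGE and TOTAL results can be illustrated with a normal-theory calculation. Averaging correlated scores shrinks their spread. Applying the single-test 95th percentile cutoff to a plain average therefore selects fewer than 5% of students. Rescaling the average back to a standard deviation of 1 restores the 5% rate and credits students who are consistently, but not extremely, high. The sketch below is a generic re-standardized composite under an assumed correlation of 0.6. It is not the publisher's TOTAL score formula, which is not described here.

```python
from math import sqrt
from statistics import NormalDist, mean

Z_CUT = NormalDist().inv_cdf(0.95)  # single-test 95th percentile, in z units


def composite_sd(r, k=3):
    """SD of the plain average of k z-scores that share pairwise correlation r."""
    return sqrt((1 + (k - 1) * r) / k)


def average_rule_rate(r, k=3):
    """Expected share whose plain average clears the single-test cutoff (normal model)."""
    return 1 - NormalDist(0, composite_sd(r, k)).cdf(Z_CUT)


def restandardized_composite(zs, r):
    """Average rescaled to SD 1, so the 95th percentile cutoff again selects about 5%."""
    return mean(zs) / composite_sd(r, len(zs))


r = 0.6  # hypothetical intercorrelation, not a published Naglieri value
print(f"AVERAGE rule rate: {average_rule_rate(r):.1%}")
profile = (1.5, 1.5, 1.5)  # consistently high, but below 1.645 on every test
print(mean(profile) >= Z_CUT, restandardized_composite(profile, r) >= Z_CUT)
```

Under these assumptions, the AVERAGE rule selects about 2.7% of students. The profile scoring 1.5 SD above the mean on every test fails the plain average (`False`) but clears the cutoff on the rescaled composite (`True`).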

Together, these findings illustrate that different combination rules support different identification priorities, and none is inherently superior; instead, each reflects a distinct balance between inclusivity, precision, and program capacity.

From Design to Practice

Gifted identification is not defined by a single choice but by how multiple decisions work together. The Naglieri General Ability Tests provide districts with three distinct measures of general ability, offering flexible, research-based tools that can be configured to match local context, program goals, and instructional capacity. Districts can apply national or local norms at different levels (e.g., district, subdistrict, or school), use individual test scores or a TOTAL score, and apply different combination rules across the three tests. Together, these options give users substantial flexibility to design identification systems that align with their values, student populations, and available resources.

By intentionally selecting norms, defining the comparison context, choosing appropriate decision rules, and setting defensible cutoffs, educators can build identification systems that are transparent, equitable, and closely aligned with the services they offer. When these elements are revisited and refined over time, identification becomes a meaningful gateway to opportunity rather than a barrier. Importantly, no single configuration is universally “best”—the most effective systems are those that align technical decisions with clearly defined program goals and service capacity.


References

¹ American Educational Research Association, American Psychological Association, & National Council on Measurement in Education. (2014). Standards for educational and psychological testing. American Educational Research Association.

² Johnsen, S. K., Simonds, M., & Voss, M. (2021). Implementing evidence-based practices in gifted education: Professional learning modules on universal screening, grouping, acceleration, and equity in gifted programs (1st ed.). Routledge. https://doi.org/10.4324/9781003235729

³,⁴ Silverman, L. K., & Gilman, B. J. (2020). Best practices in gifted identification and assessment: Lessons from the WISC-V. Psychology in the Schools, 57(10), 1569–1581. https://doi.org/10.1002/pits.22361

⁵ Age-based normative scores are based on a sample of 19,000 general population students from the U.S. in 2022–2025.

⁶ Peters, S. J., Rambo-Hernandez, K., Makel, M. C., Matthews, M. S., & Plucker, J. A. (2019). The effect of local norms on racial and ethnic representation in gifted education. AERA Open, 5(2), 1–18. https://doi.org/10.1177/2332858419848446

⁷ National Association for Gifted Children. (2026, January 1). Challenging gifted and advanced learners through differentiated curriculum and instructional strategies. https://www.nagc.org/news/challenging-gifted-and-advanced-learners-through-differentiated-curriculum–instructional-strategies